Introduction

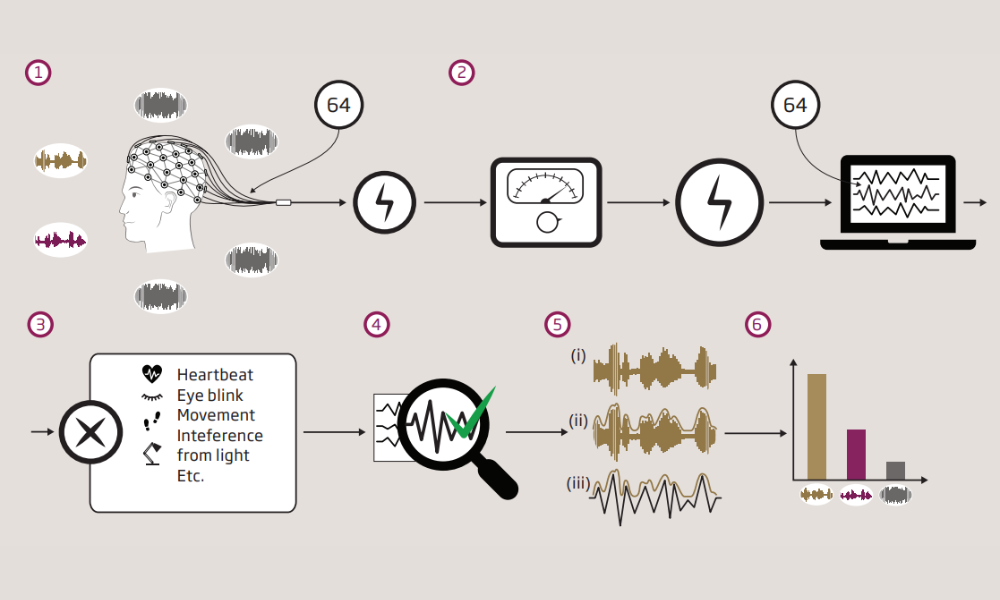

Electroencephalography (EEG) is a non-invasive technique that objectively measures electrical activity in the brain using a set of electrodes placed on the scalp. The goal of this project is to develop a set of optimized methods for continuously monitoring EEG recordings in order to estimate and track how sound is processed in the brain.

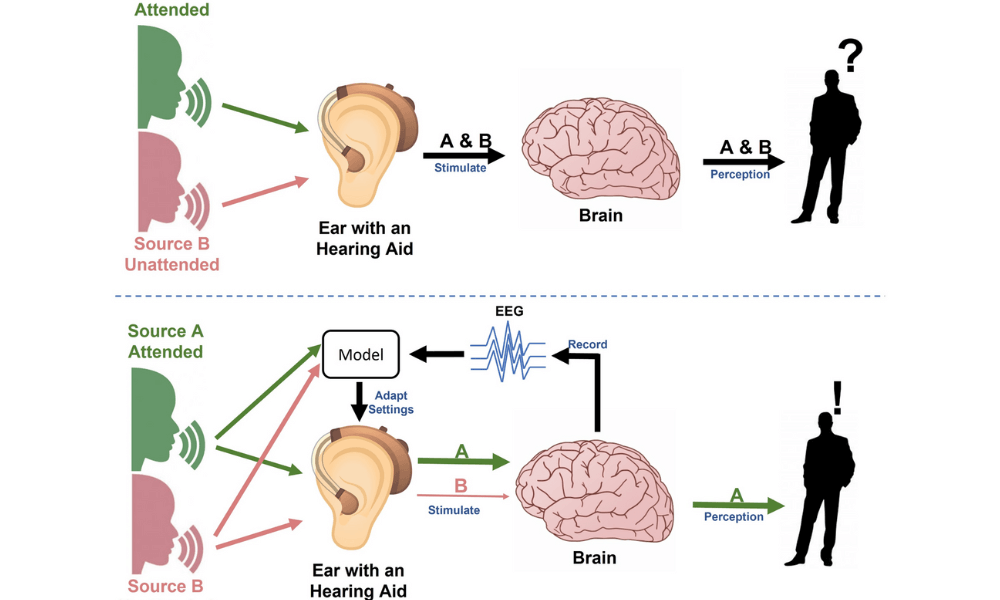

The increasing requirements on audio products for hearing devices, e.g., hearing aids (HAs), together with the recent invention of EEG electrodes that fit in the ear, call for robust methods with high temporal and spatial resolution. In this project, we address the problem of complex listening environments (e.g., the cocktail party problem) and aim to provide a better understanding of how sound is processed at different stages in the brain for both normal-hearing (NH) and hearing-impaired (HI) listeners, opening a new avenue for future advancements in HA solutions.

Aims

This project aims to expand our knowledge of how competing speech is processed in the brain. To date, EEG studies have mainly relied on sensor-level analysis to relate brain responses to competing speech streams originating from different talkers in a listening environment. We anticipate that localizing the brain sources can provide a broader and clearer picture of how competing speech streams are processed at various hierarchical levels in the brain.

PhD Project Linköping: The focus is on developing accurate models of the system from sound stimuli to locations of brain sources using EEG measurements.

PhD Project Lund: The focus is on developing adaptive and reliable high-resolution time-frequency characterizations of EEG for improved source localization in the brain.

These two PhD projects overlap in the sense that their studies and outcomes can be combined.

Methodology

We collected data from several groups of listeners with different hearing abilities. All participants were instructed to attend to one of multiple competing talkers embedded in different levels of background noise.

We develop more advanced methods for EEG sensor-level and source-level analysis to study how low-level speech features (e.g., acoustic envelope and pitch) and high-level speech features (e.g., phonemes and words) modulate brain responses.
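As an illustration of what a low-level feature such as the acoustic envelope looks like in practice, the following minimal sketch extracts a rough amplitude envelope by rectifying a signal and smoothing it with a moving average. This is one common textbook approach and not necessarily the method used in the project; the function name `acoustic_envelope`, the sampling rate, the smoothing window, and the synthetic test signal are all assumptions made for the example.

```python
import math


def acoustic_envelope(signal, fs, window_s=0.05):
    """Rough amplitude envelope: full-wave rectify, then moving-average smooth.

    `window_s` (smoothing window in seconds) is an illustrative choice.
    """
    win = max(1, int(round(window_s * fs)))
    rectified = [abs(x) for x in signal]
    envelope = []
    running = 0.0
    for i, value in enumerate(rectified):
        running += value
        if i >= win:
            running -= rectified[i - win]  # drop the sample leaving the window
        envelope.append(running / min(i + 1, win))
    return envelope


# Synthetic stand-in for speech (an assumption, not project data):
# a 200 Hz carrier whose amplitude is modulated at 4 Hz.
fs = 2000  # hypothetical sampling rate in Hz
t = [n / fs for n in range(2 * fs)]
modulation = [0.5 * (1 + math.sin(2 * math.pi * 4 * ti)) for ti in t]
audio = [m * math.sin(2 * math.pi * 200 * ti) for m, ti in zip(modulation, t)]

env = acoustic_envelope(audio, fs)
```

The resulting envelope follows the slow 4 Hz modulation rather than the 200 Hz carrier, which is the kind of slowly varying feature that is typically compared against EEG.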

Results

This project is ongoing. Some of our preliminary findings are:

- Source localization is a highly challenging task, but potentially feasible with EEG.

- The estimated coherence differences between speech envelopes and EEG can be used to track auditory attention.
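To make the idea of coherence-based attention tracking concrete, the sketch below estimates the magnitude-squared coherence between a simulated EEG channel and two envelope-like signals, then picks the talker with the larger coherence. It is a simplified illustration under stated assumptions, not the project's estimator: the data are synthetic, the mixing gains, delay, sampling rate, and 2 to 8 Hz band are hypothetical, and the helper names (`dft_bin`, `mean_coherence`, `smooth_noise`) are invented for this example. In practice a library routine such as `scipy.signal.coherence` would normally replace the hand-written estimator.

```python
import cmath
import math
import random


def dft_bin(segment, k):
    """k-th DFT coefficient of a Hann-windowed segment."""
    n = len(segment)
    total = 0j
    for i, x in enumerate(segment):
        hann = 0.5 - 0.5 * math.cos(2 * math.pi * i / n)
        total += hann * x * cmath.exp(-2j * math.pi * k * i / n)
    return total


def mean_coherence(x, y, seg_len, bins):
    """Magnitude-squared coherence averaged over the given DFT bins.

    Spectra are averaged over non-overlapping segments (Welch-style).
    """
    n_seg = min(len(x), len(y)) // seg_len
    values = []
    for k in bins:
        pxx = pyy = 0.0
        pxy = 0j
        for s in range(n_seg):
            lo, hi = s * seg_len, (s + 1) * seg_len
            a = dft_bin(x[lo:hi], k)
            b = dft_bin(y[lo:hi], k)
            pxx += abs(a) ** 2
            pyy += abs(b) ** 2
            pxy += a * b.conjugate()
        values.append(abs(pxy) ** 2 / (pxx * pyy))
    return sum(values) / len(values)


def smooth_noise(n, width, rng):
    """Moving-average-filtered Gaussian noise as an envelope-like signal."""
    raw = [rng.gauss(0.0, 1.0) for _ in range(n + width)]
    return [sum(raw[i:i + width]) / width for i in range(n)]


rng = random.Random(0)
fs = 64          # hypothetical sampling rate in Hz
n = 60 * fs      # one minute of simulated data
delay = 8        # hypothetical neural delay in samples (125 ms)

env_a = smooth_noise(n, 4, rng)  # stand-in for the attended talker
env_b = smooth_noise(n, 4, rng)  # stand-in for the ignored talker

# Simulated EEG: strong delayed copy of A, weak copy of B, plus noise.
eeg = [
    (env_a[i - delay] if i >= delay else 0.0)
    + 0.2 * env_b[i]
    + rng.gauss(0.0, 1.0)
    for i in range(n)
]

seg_len = 128            # 2 s segments, so bins are 0.5 Hz apart
bins = range(4, 17)      # bins covering 2 to 8 Hz
coh_a = mean_coherence(env_a, eeg, seg_len, bins)
coh_b = mean_coherence(env_b, eeg, seg_len, bins)
decoded = "A" if coh_a > coh_b else "B"
```

Here the decision rests only on the difference between the two coherence values; with real recordings, the choice of frequency band, segment length, and electrodes would need to be validated on data.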